
Cosine similarity

3/1/2023

It was this post that started my investigation of this phenomenon. The more I investigate it, the more it looks like every relatedness measure around is just a different normalization of the inner product. Similar analyses reveal that lift, the Jaccard index, and even the standard Euclidean metric can be viewed as different corrections to the dot product. For examples, look at “Patterns of Temporal Variation in Online Media” and “Fast time-series searching with scaling and shifting”.
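One way to make the claim above concrete, as a minimal sketch: assume each item is encoded as a 0/1 indicator vector over the same positions (for lift, over the same \(n\) transactions). That encoding is an assumption, since the comment does not say how the data is represented. Under it, the Euclidean distance, the Jaccard index, and lift can each be written using nothing but dot products. The helper names are hypothetical.

```python
import math

def dot(x, y):
    """Plain inner product <x, y>."""
    return sum(a * b for a, b in zip(x, y))

def euclidean_via_dot(x, y):
    # ||x - y||^2 = <x, x> + <y, y> - 2 <x, y>
    sq = dot(x, x) + dot(y, y) - 2 * dot(x, y)
    return math.sqrt(max(sq, 0.0))  # clamp tiny negative rounding error

def jaccard_via_dot(x, y):
    # Assumes 0/1 indicator vectors: |A & B| = <x, y>, |A| = <x, x>, |B| = <y, y>
    inter = dot(x, y)
    union = dot(x, x) + dot(y, y) - inter
    return inter / union if union else 0.0

def lift_via_dot(x, y):
    # Assumes x, y mark whether items A and B occur in each of n transactions:
    # lift = P(A and B) / (P(A) P(B)) = n <x, y> / (sum(x) * sum(y))
    n = len(x)
    return n * dot(x, y) / (sum(x) * sum(y))

x = [1, 1, 0, 1, 0]  # hypothetical item A
y = [1, 0, 0, 1, 1]  # hypothetical item B
print(jaccard_via_dot(x, y))    # 0.5
print(euclidean_via_dot(x, y))  # sqrt(2)
print(lift_via_dot(x, y))       # 10/9
```

Each measure keeps the same numerator \(\langle x, y \rangle\) and only changes what it is corrected or divided by.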

But you don't mean that if I shift the signal I will get the same correlation, right? E.g. … and …, corr = 1, but if I cyclically shift, … and …, corr = -1. Or if I just shift by padding zeros, … and …, then corr = -0.0588.

Also, could we say that correlation distance (1 - correlation) can be considered as a norm_1 or norm_2 distance somehow? For example, when we want to minimize squared errors we usually need to use Euclidean distance, but could Pearson's correlation also be used?

And last, can OLSCoef(x,y) be considered scale invariant? It is very correlated to cosine similarity, which is not scale invariant (Pearson's correlation is, right?).
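The distinction behind this question can be checked numerically. Here is a minimal sketch, assuming plain Python lists as signals. Pearson correlation ignores an affine change of the values (\(y \to a y + b\) with \(a > 0\)). Shifting the signal in time is different: it changes which samples are paired, so the correlation generally changes. The signal and helper names below are made-up illustrations, not the commenter's elided vectors.

```python
import math

def pearson(x, y):
    """Pearson correlation: cosine similarity of the mean-centered vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    xc = [a - mx for a in x]
    yc = [b - my for b in y]
    num = sum(a * b for a, b in zip(xc, yc))
    den = math.sqrt(sum(a * a for a in xc)) * math.sqrt(sum(b * b for b in yc))
    return num / den

def cyclic_shift(v, k):
    """Rotate v right by k positions, e.g. [1, 2, 3] -> [3, 1, 2] for k = 1."""
    k %= len(v)
    return v[-k:] + v[:-k] if k else list(v)

signal = [1.0, 2.0, 1.0, 2.0]  # hypothetical example signal

# Rescaling and offsetting the values leaves the correlation unchanged:
print(pearson(signal, [10 * s + 5 for s in signal]))  # 1.0
# Rotating the signal in time re-pairs the samples:
print(pearson(signal, cyclic_shift(signal, 1)))  # -1.0
```

The "shift invariance" of correlation refers to the values, not to positions along the time axis.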

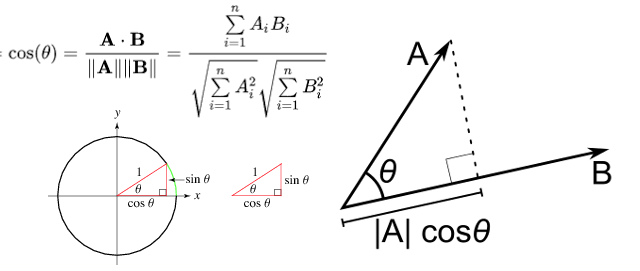

Cosine similarity, Pearson correlations, and OLS coefficients can all be viewed as variants on the inner product, tweaked in different ways for centering and magnitude (i.e. location and scale, or something like that).

You have two vectors \(x\) and \(y\) and want to measure similarity between them. A basic similarity function is the inner product

\[ \mathrm{Inner}(x,y) = \sum_i x_i y_i = \langle x, y \rangle \]

If \(x\) tends to be high where \(y\) is also high, and low where \(y\) is low, the inner product will be high: the vectors are more similar. The inner product is unbounded, though. One way to make it bounded between -1 and 1 is to divide by the vectors' L2 norms, giving the cosine similarity

\[ \mathrm{CosSim}(x,y) = \frac{\sum_i x_i y_i}{\sqrt{\sum_i x_i^2}\,\sqrt{\sum_i y_i^2}} = \frac{\langle x, y \rangle}{\lVert x \rVert\,\lVert y \rVert} \]

If \(x\) and \(y\) are non-negative, it is bounded between 0 and 1.

Are there any implications? I've been wondering for a while why cosine similarity tends to be so useful for natural language processing applications; in my experience, it is talked about more often in text processing or machine learning contexts. One implication of all the inner product stuff is computational strategies to make it faster when there's high-dimensional sparse data: the Friedman et al. 2010 glmnet paper talks about this in the context of coordinate descent text regression. I've heard Dhillon et al., NIPS 2011 applies LSH in a similar setting (but haven't read it yet), and there's lots of work using LSH for cosine similarity.

Any corrections to the above? Any other cool identities?

References: I use Hastie et al. 2009, chapter 3, to look up linear regression, but it's covered in zillions of other places. I linked to a nice chapter in Tufte's little 1974 book that he wrote before he went off and did all that visualization stuff. (He calls it “two-variable regression”, but I think “one-variable regression” is a better term; “one-feature” or “one-covariate” might be most accurate.)

To implement cosine similarity in Python, we can use the “cosine_similarity” function provided by scikit-learn.

I have a few questions (I am pretty new to this field). You say correlation is invariant to shifts. I guess you just mean that if the x-axis is not 1 2 3 4 but 10 20 30 or 30 20 10, then it doesn't change anything?
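The post writes out only the inner product and cosine similarity. Below is a minimal sketch of the other two variants it names, based on standard identities that are my addition rather than formulas from the post. Pearson correlation is the cosine similarity of the mean-centered vectors, \(\mathrm{Corr}(x,y) = \mathrm{CosSim}(x-\bar{x}, y-\bar{y})\). The slope of a one-variable OLS regression of \(y\) on \(x\) with an intercept is \(\mathrm{OLSCoef}(x,y) = \langle x-\bar{x}, y \rangle / \lVert x-\bar{x} \rVert^2\). The function names are illustrative.

```python
import math

def inner(x, y):
    """Inner(x, y) = sum_i x_i * y_i."""
    return sum(a * b for a, b in zip(x, y))

def center(x):
    """Subtract the mean, removing 'location'."""
    m = sum(x) / len(x)
    return [a - m for a in x]

def cos_sim(x, y):
    """Inner product divided by both L2 norms, removing 'scale'."""
    return inner(x, y) / (math.sqrt(inner(x, x)) * math.sqrt(inner(y, y)))

def corr(x, y):
    """Pearson correlation = cosine similarity of the centered vectors."""
    return cos_sim(center(x), center(y))

def ols_coef(x, y):
    """Slope b in y ~ a + b*x: centered inner product over ||x - mean(x)||^2."""
    xc = center(x)
    return inner(xc, y) / inner(xc, xc)

x = [1.0, 2.0, 3.0, 4.0]
y = [1.0, 3.0, 2.0, 5.0]
print(cos_sim(x, y), corr(x, y), ols_coef(x, y))
```

Following the definitions through:
- Multiplying \(y\) by a positive constant leaves `cos_sim` and `corr` unchanged.
- Adding a constant to \(y\) changes `cos_sim` but not `corr` or `ols_coef`.
- Multiplying \(x\) by \(c\) divides `ols_coef` by \(c\), so the OLS coefficient is not scale invariant in \(x\).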
In machine learning applications, this technique is mainly used in recommendation systems. Comparing the descriptions of two products tells us how similar they are, so we can recommend the most similar product to the user and provide a better user experience. I hope by now you understand that the idea behind cosine similarity is to measure how similar two documents are. Now, let's see how to implement it in Python; in the section below, I will walk you through how to calculate cosine similarity using Python.
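Here is a minimal, dependency-free sketch of the recommendation use case. It assumes plain word counts stand in for whatever document vectors a real system would use, such as TF-IDF. Each product description becomes a sparse bag-of-words vector, and the item with the highest cosine similarity to a query is returned. The catalog and helper names are hypothetical. Given a document-term matrix, scikit-learn's “cosine_similarity” (in `sklearn.metrics.pairwise`) computes the same quantity for every pair of rows; the loop here just makes the arithmetic visible.

```python
import math
import re
from collections import Counter

def bag_of_words(text):
    """Lowercase and keep runs of letters; a deliberately crude tokenizer."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity of two sparse count vectors stored as Counters."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())  # only shared words contribute
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def most_similar(query, catalog):
    """Return (name, score) for the catalog item closest to the query text."""
    q = bag_of_words(query)
    scores = {name: cosine(q, bag_of_words(desc)) for name, desc in catalog.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]

catalog = {  # hypothetical product descriptions
    "kettle": "Electric kettle, stainless steel, 1.7 litre",
    "toaster": "Stainless steel toaster, two slice",
    "mug": "Ceramic coffee mug",
}
print(most_similar("stainless steel electric kettle", catalog))  # kettle scores highest
```

Iterating only over shared keys is what makes the dot product cheap for sparse, high-dimensional text vectors.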

Cosine similarity is used to find the similarity between two documents. It does this by representing each document as a vector and computing a similarity score from the cosine of the angle between the vectors. For vectors with non-negative entries, such as word counts, the score ranges from 0 to 1; in general it ranges from -1 to 1, where -1 means the vectors point in opposite directions. When the similarity score is 1, the angle between the two vectors is 0 degrees: they point in the same direction and are as similar as possible. When the score is 0, the angle is 90 degrees: the vectors are orthogonal, and there is no similarity between them (for word-count vectors, the documents share no words).
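The correspondence between score and angle can be checked directly, since \(\theta = \arccos(\mathrm{CosSim}(x,y))\). Here is a minimal sketch with illustrative vectors. The clamp guards against floating-point results slightly outside \([-1, 1]\), which would make `math.acos` raise a `ValueError`.

```python
import math

def cosine(x, y):
    """Cosine similarity of two dense vectors."""
    dot = sum(a * b for a, b in zip(x, y))
    return dot / (math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y)))

def angle_degrees(x, y):
    """Angle between x and y in degrees, recovered from the cosine score."""
    c = max(-1.0, min(1.0, cosine(x, y)))  # clamp rounding error into acos's domain
    return math.degrees(math.acos(c))

pairs = {  # illustrative vectors
    "same direction": ([1.0, 2.0], [2.0, 4.0]),
    "orthogonal": ([1.0, 0.0], [0.0, 1.0]),
    "opposite": ([1.0, 0.0], [-1.0, 0.0]),
}
for label, (x, y) in pairs.items():
    print(f"{label}: score={cosine(x, y):.3f}, angle={angle_degrees(x, y):.1f} deg")
```

The "opposite" pair reaches a score of -1, which is only possible when some entries are negative.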
